The hard part of software was never the code.

I. The Inversion

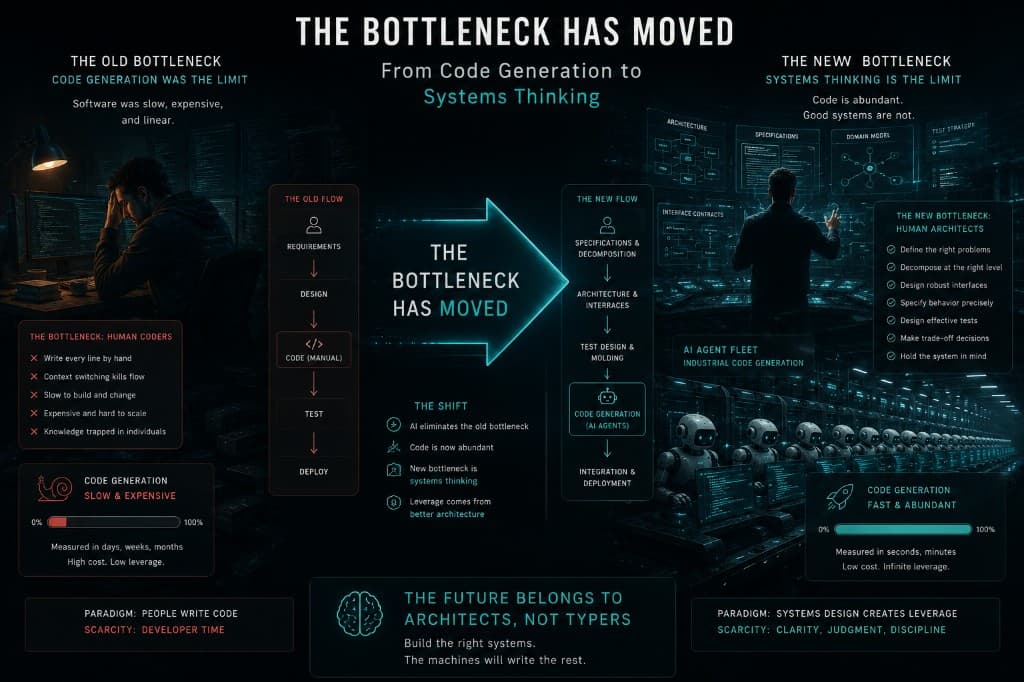

Everything you know about building software is organized around a bottleneck that no longer exists.

For sixty years, the central problem of software engineering has been the same. Translating human intent into working code is slow, expensive, and error prone. Every methodology invented since the 1960s, from Waterfall to Spiral to Agile to SAFe, has been a different answer to the same question. How do we manage the cost of writing code?

Waterfall tried to get it right the first time. Agile tried to get it right iteratively. Both assumed that writing code is where the work lives, and everything else, documentation, specification, testing, exists to support that central act.

That assumption is now false. AI agents can generate syntactically correct, functionally plausible code faster than a human can describe what they want. The cost of producing code has collapsed by orders of magnitude, and it continues to fall. A function that took an engineer an hour to write, test, and debug can be generated in seconds. A module that took a team a week can be produced by a fleet of agents in an afternoon.

Here is what has not collapsed. The cost of knowing what to build. The cost of understanding a domain deeply enough to specify its edge cases. The cost of designing interfaces that will survive contact with production. The cost of constructing tests that define correctness precisely enough that a machine can verify it. These upstream activities, specification, architecture, and test design, remain fundamentally human, cognitively expensive, and irreducibly complex.

The bottleneck has moved. The entire discipline must move with it.

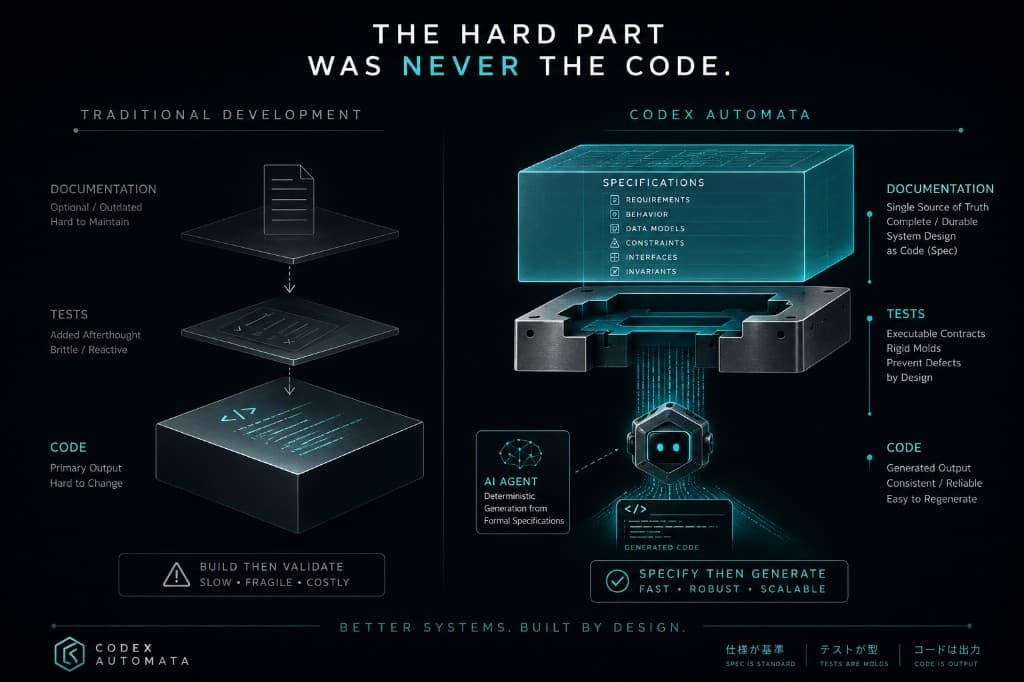

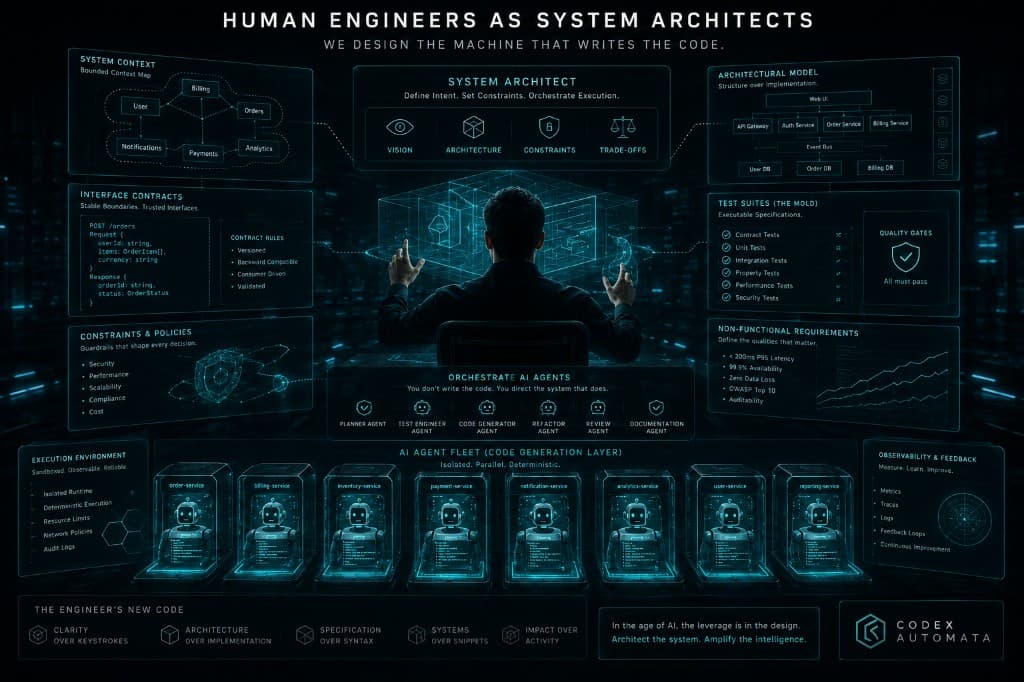

Codex Automata inverts the pipeline. The traditional sequence runs Code, then Tests, then Documentation, with docs treated as an afterthought and frequently abandoned. We reverse it entirely.

Documentation, Tests, Code

Documentation comes first. Before a single line of implementation exists, the system is specified. Its architecture, its interfaces, its data models, its failure modes, its edge cases. The specification is the primary engineering artifact. It is the thing that took real thought to produce.

Tests come second. Derived from the specification, they define the exact shape the code must take. Every behavior in the spec has a corresponding assertion. Every edge case has a corresponding test. The tests are precise, rigid, and complete. They are the mold.

Code comes last. An agent, or many agents working in parallel, receives the spec and the tests and writes implementation until every test passes. The code is a casting, a commodity artifact produced by pouring implementation into a prebuilt mold.

The economics of the moment demand this sequence. When code is cheap and specification is expensive, you optimize for specification. You put your best people, your deepest thinking, and your most rigorous process on the thing that is hardest to get right. Then you let machines handle the rest.

The hard part has always been the documentation.

II. The Mold

In industrial manufacturing, there is a clean separation between tooling and production. The mold is expensive. It is engineered with precision, machined to tight tolerances, tested and inspected before a single part is produced. The casting, the actual product, is cheap and repeatable. You pour metal into the mold, and the shape is predetermined. If the casting is defective, you do not blame the metal. You fix the mold.

This separation is the correct mental model for software in the agentic era.

Tests are the mold. Code is the casting.

This sounds like test driven development, and it shares DNA with Kent Beck's original insight. Write the test first, let failure guide design. Codex Automata takes it further. TDD, as commonly practiced, is a feedback loop between a developer writing a test and the same developer writing the code that passes it. It is a conversation a person has with themselves. The test and the code evolve together, often in the same session, shaped by the same mind's biases and blind spots.

When agents write the code, the dynamic changes completely. The test becomes a rigid constraint. It is a precise specification of acceptable behavior that a machine must satisfy. The test does not evolve with the code. The test stands firm, and the code must conform.

This distinction matters because it changes what tests need to be. In traditional TDD, a test can be somewhat loose. It captures the developer's intent well enough because the developer is also the implementer. In Codex Automata, tests must be sharp. They must overconstrain the solution space where behavior matters and leave freedom only where freedom is deliberate.

Without tests, an agent generates infinite variety. It can produce a dozen implementations of the same spec, each plausible, each slightly different, with no way to determine which is correct. The agent is simply unconstrained. Feed it a sharp mold, and the output snaps into a specific shape. The mold transforms code generation from an open ended creative act into a bounded search problem. Find an implementation such that all assertions pass.
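The bounded search can be made concrete with a minimal sketch. Everything here is hypothetical and chosen only for illustration: the `slugify` behavior, the four assertions standing in for a spec, and the two candidate implementations standing in for agent output. The point is only that the mold, not a human reader, decides which candidate is acceptable.

```python
import re
from typing import Callable, Iterable, Optional

Candidate = Callable[[str], str]

def mold(slugify: Candidate) -> None:
    """Assertions derived from a hypothetical spec for slugify()."""
    assert slugify("Hello World") == "hello-world"
    assert slugify("  padded  ") == "padded"   # edge case: surrounding whitespace
    assert slugify("a--b") == "a-b"            # edge case: repeated separators
    assert slugify("") == ""                   # edge case: empty input

def candidate_a(text: str) -> str:
    # Plausible, but it ignores the whitespace and separator edge cases.
    return text.lower().replace(" ", "-")

def candidate_b(text: str) -> str:
    # Collapses every run of non-alphanumerics into a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")

def first_passing(candidates: Iterable[Candidate]) -> Optional[Candidate]:
    """Return the first candidate that satisfies every assertion in the mold."""
    for candidate in candidates:
        try:
            mold(candidate)
        except AssertionError:
            continue  # defective casting: discard it and try the next one
        return candidate
    return None

chosen = first_passing([candidate_a, candidate_b])
```

Both candidates look reasonable on a quick read. Only the second survives the mold, because the edge case assertions are where the two diverge.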

Better constraints produce better results, faster.

Toyota understood this fifty years ago. The Toyota Production System draws a hard line between process preparation and production execution. Enormous effort goes into designing jigs, fixtures, and tooling, the equivalent of our tests and specs. Production itself is fast, repeatable, and largely automated. When a defect appears on the line, the response is to fix the tooling, not tinker with the product. The concept of jidoka, stopping the line when quality fails, maps directly to CI/CD quality gates that halt promotion until the mold is corrected.

The implication for software teams is stark. The engineering lives in the mold. Your best engineers should be spending their time designing molds, writing specifications, deriving test cases, and thinking through edge cases. Implementation code that a machine can produce in seconds is no longer where the real work lives.

If code is wrong, do not debug the implementation. Fix the spec. Fix the tests. Recast.

III. The Anatomy of Good Work

There is a reason Unix conquered the world and the alternatives did not.

In the late 1960s and early 1970s, Ken Thompson, Dennis Ritchie, and their colleagues at Bell Labs made design choices that seemed, at the time, almost trivially obvious. Programs should do one thing well. They should communicate through simple, text based interfaces. They should compose through pipes. The result was an ecosystem of small, sharp tools that could be combined in ways their creators never anticipated. grep does not know about sort. sort does not know about uniq. But grep pattern file | sort | uniq -c does something none of them could do alone, and it does it reliably because each piece has one job and clear boundaries.

This is an engineering law. Small, independent components compose. Large, entangled components do not. The reason is mathematical. As a system grows, the number of potential pairwise interactions between its parts grows quadratically. A system of n tightly coupled components has n(n-1)/2 of them. Ten components have 45 pairwise interactions. One hundred have 4,950. Tight coupling makes each interaction a potential failure mode, a coordination burden, and a communication channel that must be maintained.
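The arithmetic is easy to check. The short sketch below counts unordered pairs with the standard library's `math.comb`. The function name `pairwise_interactions` is ours, introduced only for illustration.

```python
import math

def pairwise_interactions(components: int) -> int:
    """Number of distinct pairs among `components` fully coupled parts: n(n-1)/2."""
    return math.comb(components, 2)

ten = pairwise_interactions(10)           # 45
hundred = pairwise_interactions(100)      # 4950
# Cost of adding one more part to a fully coupled system of 100:
marginal = pairwise_interactions(101) - hundred   # 100 new potential interactions
```

The marginal figure is the one that hurts in practice. In a fully coupled system, the hundred and first component can interact with every one of the hundred that came before it.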

Loose coupling with clean interfaces collapses this explosion. Each component interacts with a small, stable surface area. Adding a new component does not require understanding all existing components, only the interfaces it touches.

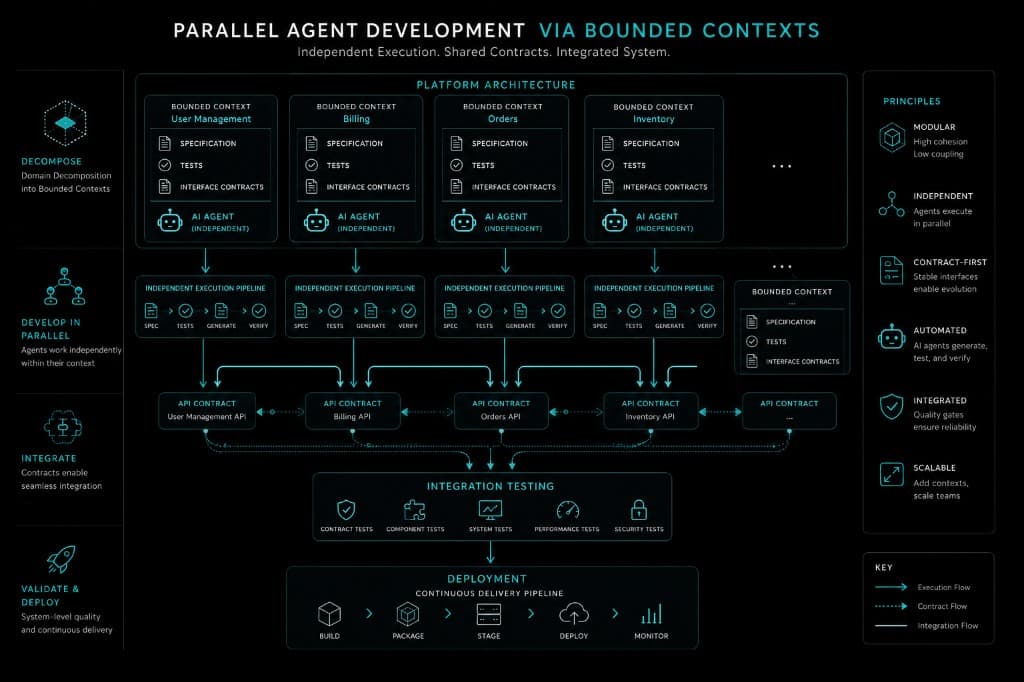

Eric Evans formalized this insight for software systems with Domain Driven Design. His bounded contexts are a pragmatic answer to a difficult question. How do you partition a complex domain so that each partition can be understood, built, and maintained independently? The answer is to draw boundaries where the domain model changes meaning, where the same word means different things to different parts of the system. Each bounded context gets its own language, its own model, its own truth.

In the agentic era, bounded contexts serve a second purpose that Evans could not have anticipated. They are the natural unit of parallel execution. Each bounded context can receive its own spec, its own tests, and its own agent. The agents do not need to communicate with each other because the boundaries ensure independence. The interfaces between contexts are defined in contracts, frozen before code exists, and verified by integration tests after the cast.
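A frozen contract between two contexts might look like the following sketch. The pricing domain, the `Quote` type, the `PricingContract` protocol, and the specific rules checked are all hypothetical, invented here for illustration. The shape is what matters: the interface and its verification exist before any implementation does, and every implementation cast later must pass the same check.

```python
from dataclasses import dataclass
from typing import Protocol

@dataclass(frozen=True)
class Quote:
    """Message type shared across the context boundary (hypothetical)."""
    sku: str
    unit_price_cents: int

class PricingContract(Protocol):
    """The frozen interface. Neighboring contexts depend only on this."""
    def quote(self, sku: str, quantity: int) -> Quote: ...

def verify_pricing_contract(impl: PricingContract) -> None:
    """Contract test that any implementation must pass after the cast."""
    result = impl.quote("SKU-1", 3)
    assert isinstance(result, Quote)
    assert result.sku == "SKU-1"
    assert result.unit_price_cents >= 0
    try:
        impl.quote("SKU-1", 0)
    except ValueError:
        pass  # assumed rule: a quantity below 1 is rejected
    else:
        raise AssertionError("quantity below 1 must raise ValueError")

class FlatPricing:
    """One possible casting. An agent could produce many others."""
    def quote(self, sku: str, quantity: int) -> Quote:
        if quantity < 1:
            raise ValueError("quantity must be at least 1")
        return Quote(sku=sku, unit_price_cents=250)

verify_pricing_contract(FlatPricing())
```

An agent working on a neighboring context needs only `PricingContract` and `Quote`. It never needs to see `FlatPricing`, which is why the two can be built at the same time.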

This is the insight that makes parallelization work. Modularity is the prerequisite for agentic development. Without clean boundaries, you cannot parallelize. Without parallelization, you are running agents sequentially, which means you have expensive infrastructure reproducing the same bottleneck you had with human developers. You have changed nothing.

The corollary is equally important. Atomization is about making things small enough to be independently completable. A task that can be picked up by an agent, executed to completion against its own tests, and merged without coordinating with other agents, that is an atom. A task that requires mid flight coordination between agents, that is a molecule, and it will slow you down.

Brooks argued in 1975 that adding people to a late project makes it later. His reasoning was precise. The number of communication channels grows with roughly the square of the team size. Ten people generate 45 communication channels. A hundred generate 4,950. The project drowns in coordination.

Brooks was solving for the wrong variable. He assumed that every worker needs to communicate with every other worker. That is true when the specification is incomplete or ambiguous, when workers need to negotiate intent, resolve contradictions, and align on meaning in real time. It is false when the specification is complete. Ten agents reading the same spec create zero communication channels. A hundred agents reading the same spec still create zero communication channels. The spec is the communication. Brooks' Law holds for humans precisely because humans cannot perfectly externalize their mental models into shared documents. Codex Automata's entire philosophy is an attack on that limitation. Externalize everything into specs and tests, and the coordination cost approaches zero.

The implication is uncomfortable for engineers who take pride in writing code. The most valuable work you can do is decomposition. Carving a system into modules with clean interfaces, sharp specs, and comprehensive tests. That is the work that unlocks everything else. The implementation is what happens after the real engineering is done.

IV. The Economics of Forward

There is a deeply held instinct in software engineering that says build the minimal thing first. Start with a prototype. Get it working, then make it scale. Premature optimization is the root of all evil. Ship the simplest thing that could possibly work.

This instinct was correct when writing code was expensive. If generating a hundred lines of code cost an hour of human effort, you wanted to generate as few lines as possible. You optimized for minimal output. Build the small version, see if it works, then invest in the larger version only if the market demands it. The cost of code was the binding constraint.

When code generation costs near zero, the calculation inverts. The cost of generating scalable code is now negligible. The cost of retrofitting scalability into a tightly optimized minimal system is enormous. It requires rewriting specs, rewriting tests, rewriting interfaces, and recasting the entire module. You pay the full cost of the Codex Automata pipeline again, not because you are building something new, but because you are rebuilding something you already built wrong.

In the agentic era, the efficient strategy is to build at scale first and optimize later.

The default should be generality, meaning abstractions that accommodate growth, interfaces that anticipate extension, and data models that handle volume. The marginal cost of generating that generality is near zero, and the cost of not having it when you need it is a full architecture rework.

Google's engineering culture has operated this way for decades, though for different reasons. Monorepo practices, shared infrastructure, and aggressive standardization mean that every new service at Google inherits scalability patterns from day one. The reason is practical. Retrofitting scalability later is more expensive than including it from the start. SRE's error budgets are design constraints, not operational afterthoughts. The acceptable failure rate is specified before the service is built, and the architecture is designed to meet that target.

In Codex Automata, the same logic applies at the individual project level. You specify performance requirements in the spec. You write tests against those requirements. You build the pipeline with performance benchmarks from day one. The agent generates code that meets those constraints from the first cast. If profiling later reveals that certain paths are overprovisioned, you tighten, armed with data rather than intuition. Optimization becomes an evidence driven refinement activity instead of a frantic scramble when the system falls over under load.
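A performance requirement can live in the mold next to the functional ones. The sketch below is illustrative only. The `dedupe` function, the input size, and the two second budget are assumptions, not figures from any real spec, and a production benchmark would want a stable runner and repeated measurements rather than a single wall clock reading.

```python
import time

def dedupe(items):
    """Hypothetical module under test: drop duplicates, keep first-seen order."""
    return list(dict.fromkeys(items))

def check_dedupe_budget(n: int = 100_000, budget_seconds: float = 2.0) -> float:
    """Assert correctness and a time budget together. Returns elapsed seconds."""
    data = [i % 1000 for i in range(n)]  # n items, only 1000 distinct values
    start = time.perf_counter()
    result = dedupe(data)
    elapsed = time.perf_counter() - start
    assert result == list(range(1000))   # functional requirement
    assert elapsed < budget_seconds      # performance requirement (assumed budget)
    return elapsed

elapsed = check_dedupe_budget()
```

If later profiling shows the budget is far looser than real traffic requires, the number in the test is what gets tightened, and the module is recast against it.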

The traditional scaling path, build minimal then rewrite, made sense when rewrites were cheaper than upfront investment. In the agentic era, upfront investment in generality is cheap and rewrites are expensive, because a rewrite means redoing the spec, the tests, and the interfaces. The economics point forward.

V. The Flow

Scrum was designed for humans. Its core assumptions are biological.

Sprints assume that workers fatigue over a two week cycle and need a reset boundary. They assume that estimation is meaningful because the same humans will do the work they estimated. They assume that velocity stabilizes because teams learn and improve at a roughly steady pace. They assume that daily standups are necessary because humans forget context overnight and need synchronization rituals. They assume that retrospectives are necessary because humans do not naturally reflect on process without structured prompts.

None of these assumptions apply to AI agents. Agents do not fatigue. They do not estimate. They execute or they fail. Their velocity is a function of task complexity and model capability, not available hours. They do not lose context overnight. They do not need standups.

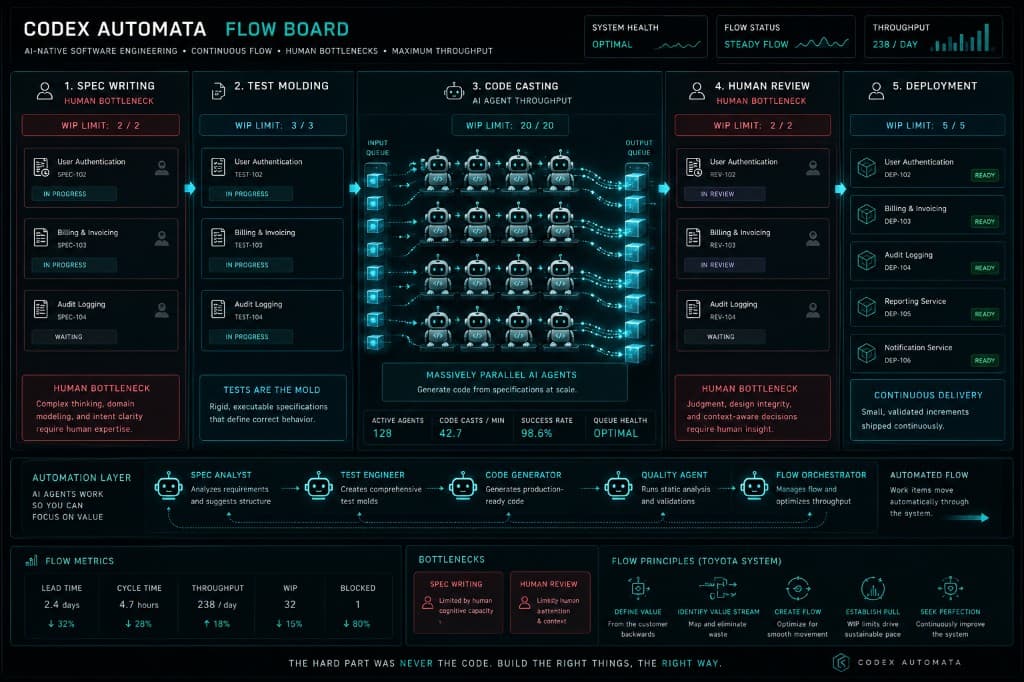

What agents need is a steady flow of well specified work, clear completion criteria, and automated quality gates. That is kanban.

Toyota's manufacturing philosophy never used sprints. It used continuous flow with pull based scheduling and work in progress limits. When a downstream station finishes processing a part, it pulls the next part from the upstream buffer. Nothing is pushed forward until the downstream station signals readiness. WIP limits prevent any station from accumulating inventory that exceeds its processing capacity. The result is smooth, predictable throughput with minimal waste.

This maps to agentic development with almost no translation needed.

The Spec Writing station produces specifications. It has a WIP limit, typically small because humans are the bottleneck here, and work only proceeds when a spec meets its exit criteria.

The Test Molding station consumes specs and produces tests. Multiple agents can work this station in parallel, but a WIP limit prevents an avalanche of untested specs from piling up.

The Code Casting station has no WIP limit. This is where parallelism lives. Every module with a complete mold can be cast simultaneously. Ten modules, ten agents, one afternoon.

The Review station has a WIP limit again. Human attention is finite. This is the second bottleneck, and the board makes it visible.

Where does the bottleneck live in this system? It is never Code Casting. It is always Spec Writing or Review. The board makes this obvious. If work items are piling up in the Backlog and Code Casting is empty, the system is starved of specification. If Code Casting output is piling up before Review, the system is starved of human attention. The board does not just track progress. It diagnoses the system.
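The stations and their pull rule fit in a few lines. This is a toy model under stated assumptions: the specific WIP limits (2 for Spec Writing, 1 for Review), the separate "Awaiting Review" buffer, and the wording of the diagnosis strings are ours, chosen to keep the example small.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Station:
    name: str
    wip_limit: Optional[int] = None          # None models "no WIP limit"
    items: list = field(default_factory=list)

    def has_capacity(self) -> bool:
        return self.wip_limit is None or len(self.items) < self.wip_limit

def pull(upstream: Station, downstream: Station) -> bool:
    """Move one item downstream only when the downstream station has room."""
    if upstream.items and downstream.has_capacity():
        downstream.items.append(upstream.items.pop(0))
        return True
    return False

def diagnose(backlog: Station, casting: Station,
             awaiting_review: Station, review: Station) -> str:
    """Read the bottleneck off the board, following the two rules in the text."""
    if awaiting_review.items and not review.has_capacity():
        return "starved of human attention"
    if backlog.items and not casting.items:
        return "starved of specification"
    return "flowing"

backlog = Station("Backlog", items=["A", "B", "C"])
spec_writing = Station("Spec Writing", wip_limit=2)
casting = Station("Code Casting")            # unlimited: parallelism lives here
awaiting_review = Station("Awaiting Review")
review = Station("Review", wip_limit=1)

pulled = [pull(backlog, spec_writing) for _ in range(3)]
status = diagnose(backlog, casting, awaiting_review, review)
```

The third pull is refused because Spec Writing is at its limit, so item C waits in the Backlog while Code Casting sits empty. The board reports exactly that.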

CI/CD is the scaffolding that makes the entire flow work. The pipeline exists before the first line of code is written, configured during the Architecture phase alongside specs and interface contracts. It is as foundational as the rebar in a concrete building. Invisible in the finished product, essential to its structural integrity.

Quality gates in the pipeline encode the Codex Automata process itself. Does the code lint? Does every module have a spec? Does every spec have tests? Does the change respect module boundaries? Is the commit atomic? The pipeline enforces the process mechanically so that discipline does not depend on memory or willpower.
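Two of those gates, "does every module have a spec" and "does every spec have tests", can be sketched as a pure function over a list of repository paths. The layout assumed here (`modules/<name>/SPEC.md` and `modules/<name>/tests/...`) is hypothetical. A real pipeline would read paths from the checkout and fail the build when the returned list is not empty.

```python
from pathlib import PurePosixPath
from typing import Iterable, List

def gate_report(paths: Iterable[str]) -> List[str]:
    """Return one failure message per module that lacks a spec or tests."""
    modules = {}
    for raw in paths:
        parts = PurePosixPath(raw).parts
        if len(parts) < 3 or parts[0] != "modules":
            continue  # not inside a module directory
        entry = modules.setdefault(parts[1], {"spec": False, "tests": False})
        if parts[2] == "SPEC.md":
            entry["spec"] = True
        elif parts[2] == "tests" and len(parts) > 3:
            entry["tests"] = True  # at least one file under tests/
    failures = []
    for name in sorted(modules):
        if not modules[name]["spec"]:
            failures.append(f"{name}: missing spec")
        if not modules[name]["tests"]:
            failures.append(f"{name}: missing tests")
    return failures

report = gate_report([
    "modules/billing/SPEC.md",
    "modules/billing/tests/test_invoice.py",
    "modules/billing/src/invoice.py",
    "modules/search/src/index.py",   # code with no spec and no tests
])
```

In this example `billing` passes and `search` is reported twice, once for the missing spec and once for the missing tests.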

VI. The Machine

Picture the system running at full speed.

An engineer receives a feature request. He opens a conversation, with an agent, with the codebase, with the domain. What are the boundaries? What contracts will change? Where will the new behavior touch existing modules? The agent maps the terrain. The engineer supplies judgment. The output is a set of atomic work items, each with sharp boundaries, each independently completable.

He writes the spec. This is the hardest hour of his day. The difficulty is in the thinking. What should the system do at the boundary? What happens when the network drops mid transaction? What does "success" mean, precisely, in terms a machine can verify? The spec is challenged, revised, and frozen. It is the primary artifact, the thing that took real thought to produce.

An agent reads each spec and builds the mold. Unit tests, integration tests, contract tests. The tests compile. Every one fails. No implementation exists yet. The mold is ready.

Then the cast. Five modules. Five agents. Each receives its spec, its tests, and the interface contracts of its neighbors. They work in parallel, independently, silently. They do not communicate because they do not need to. The specs and contracts contain everything. One finishes in minutes. Another takes an hour. They are not synchronized. When each agent's tests pass, the module is done.
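The unsynchronized cast maps naturally onto a worker pool. In the sketch below `cast_module` is a hypothetical stand-in that always succeeds. A real harness would launch an agent there and run the module's test suite. The module names are invented, and the only real API used is the standard library's `concurrent.futures`.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Dict, List

def cast_module(name: str) -> bool:
    """Hypothetical agent run: returns True when the module's tests pass."""
    return True  # placeholder for "invoke agent, then run the mold"

def cast_all(modules: List[str]) -> Dict[str, bool]:
    """Cast every module in parallel and collect results as each one finishes."""
    results: Dict[str, bool] = {}
    with ThreadPoolExecutor(max_workers=max(1, len(modules))) as pool:
        futures = {pool.submit(cast_module, name): name for name in modules}
        for future in as_completed(futures):   # completion order, not submit order
            results[futures[future]] = future.result()
    return results

results = cast_all(["auth", "billing", "catalog", "search", "shipping"])
done = all(results.values())
```

Nothing in `cast_all` lets one worker wait on another. Each module is finished the moment its own result comes back, which is the property the paragraph above describes.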

Automated gates fire. An agent reviews against the spec. A human reviews last, checking for coherence. Does the system still make sense as a whole? The pipeline runs. The code deploys. Monitoring confirms that production matches the spec. If it does not, the cycle restarts at the mold.

Remove any piece and the machine breaks. Without specs, agents hallucinate, producing plausible code aimed at the wrong problem. Without tests, infinite variety with no definition of correct. Without modularity, agents collide in merge conflicts, contradictions, and chaos. Without flow, work pools in the wrong places. Without CI/CD, the whole arrangement depends on discipline that humans will eventually forget and agents will never have.

The principles are load bearing members in a single structure, and each assumes the others are present. Specification creates the foundation. Tests constrain the shape. Modularity enables concurrency. Flow exposes bottlenecks. CI/CD enforces integrity. Remove one, and the structure does not degrade gracefully. It collapses.

This is why the methodology is called Codex Automata, the book of self moving things. The specifications, tests, gates, and pipelines form a machine that, once built, moves on its own. Agents fill the mold. The pipeline verifies the casting. The board reveals the flow. Humans design the machine and oversee its operation, but the machine runs.

The engineer's job is to build the machine that writes the code correctly.

VII. The Fracture Lines

Every methodology has a domain where it excels and a boundary where it breaks. Codex Automata is no different. Intellectual honesty requires mapping the fractures.

The methodology assumes that the problem can be specified before it is solved. This is true for the vast majority of production software. CRUD applications, data pipelines, API services, infrastructure tooling, enterprise systems. It is not always true for research. When a physicist is groping toward a new model, or a machine learning researcher is exploring whether an architecture can learn a task at all, the specification and the discovery are the same act. You cannot write tests for a hypothesis you have not yet formed. In these domains, exploratory code is the instrument of thought. Codex Automata applies after the exploration, when the shape of the solution is known and the task is to engineer it reliably.

Similarly, certain creative work resists upfront specification. A designer prototyping an interaction in code is not implementing a spec. He is using code as a sketching medium, where the "specification" is the felt experience of the interface, and it only exists in his judgment after he sees it. Applying rigid test first discipline to this kind of work would strangle it. The correct response is to let creative work be creative, then, once the design crystallizes, specify and test the result for production.

There is also the question of scale. A solo developer building a weekend project does not need a kanban board, formal specs, and CI/CD quality gates. The overhead would exceed the benefit. Codex Automata is designed for systems that matter. Systems that must be correct, must scale, must be maintained by more than one person or agent over time. For a throwaway script, write the script.

The deepest limitation is the cold start problem. Writing good specs requires domain knowledge, architectural taste, and the hard won intuition that comes from years of building systems that failed. You cannot spec what you do not understand. Junior engineers cannot be dropped into the Spec Writing station and expected to produce sharp molds. They must first learn what good looks like, which means, paradoxically, they may need to write bad code, debug it, and internalize the failure modes before they can specify well. The methodology does not eliminate the need for experience. It concentrates experience where it matters most.

None of these limitations invalidate the approach. They define its scope. Codex Automata is a methodology for engineering production systems in an era when implementation is cheap and specification is the binding constraint. Within that scope, it is precise.

VIII. The Engineer, Redefined

If agents write the code, what becomes of the engineer?

The question is understandable. For decades, engineering identity has been bound to implementation. We interview for coding ability. We promote for technical depth. We admire the developer who can hold an entire system in their head and produce elegant, efficient code from raw intent. That skill was genuinely rare and valuable when implementation was the bottleneck. It commanded respect because it was hard.

It is still hard. It is no longer scarce.

The shift is a promotion to the work that was always more important but rarely rewarded proportionally. The engineer who can decompose a complex domain into clean modules with precise contracts has always been more valuable than the engineer who can implement one of those modules quickly. We just could not afford to acknowledge it, because we needed both, and the implementers outnumbered the architects by necessity.

When implementation is automated, the ratio inverts. The scarce, valuable skill becomes defining exactly what a function must do, in what contexts, with what failure modes, within what performance envelope, and proving it with tests before a line of code exists. That is specification. It is a higher order skill than implementation, and it requires everything implementation requires, deep technical knowledge, systems thinking, an internalized model of how software behaves, plus the ability to externalize that knowledge into artifacts precise enough for a machine to act on.

The new engineering skillset is domain modeling, constraint design, interface architecture, and test derivation. It is knowing what questions to ask about a system before building it. It is understanding failure modes well enough to specify them in advance. It is the ability to think in contracts, in invariants, in boundary conditions, and to express that thinking in documentation and tests that leave no room for misinterpretation.

This is harder, more technical work that has always existed but was often skipped because the pressure to ship code pushed engineers past the thinking and into the typing. Codex Automata removes that pressure. When agents handle the typing, engineers can finally do the thinking that the industry has always claimed mattered most but rarely made time for.

The engineer of the agentic era does not write less. He writes differently. Specifications instead of implementations, constraints instead of solutions, molds instead of castings. His output is more durable, more leveraged, and harder to produce than the code it generates.

Coda

The giants of software engineering, Beck, Brooks, Martin, Evans, Fowler, the engineers at Bell Labs, NASA, Toyota, and Google, spent decades articulating the principles that Codex Automata codifies. Write the test first. Maintain conceptual integrity. Keep components small and composable. Separate concerns. Limit work in progress. Integrate continuously. Specify before you build.

They were right about all of it. For decades, the industry adopted these principles imperfectly because the discipline they demanded was expensive in human time and attention. It was faster to skip the spec. It was easier to write the test after. It was more exciting to write code than documentation. The principles were honored in conference talks and violated in commit logs.

AI agents do not change the principles. They change the cost of following them. When implementation is handled by machines, the spec becomes the main deliverable. Tests become the mechanism that makes production reliable. Modularity becomes the structural requirement for running ten agents in parallel. The discipline that engineers could never quite afford is now the thing they cannot afford to skip.

The principles were always right. The economics finally agree.

Build the mold. The rest is casting.

Codex Automata v1.0, May 2026
